Backing up my homelab with restic and Cloudflare R2

I run a small homelab on an Ubuntu laptop in the corner of my living room — DNS, VPN, media, a handful of self-hosted services. After my wg-easy DB corrupted itself during a power blip in March, I finally sat down to do this properly — and realized “backup” was hiding three different problems:

- Code — compose files, scripts, host config. Lives in git.

- Secrets — API tokens, SSH keys, bcrypt hashes. Lives in Bitwarden.

- State — SQLite databases, TLS certs, runtime config that services mutate. This is what restic handles.

Keeping the three separate is the whole trick. On disk-dies day, recovery is:

git clone → bw-pull.sh → restic restore → docker compose up

Why restic

I spent an evening trying to make rsync do this before realizing it doesn’t dedupe. restic was the obvious answer all along, and Berkay Bulut’s “Offsite Backups Without the Drama” was the post that pushed me over the edge. A few reasons it works for me:

- AES-256 at rest. The repo password lives in Bitwarden, not on the box.

- Content-addressed dedup. Nightly snapshots cost kilobytes, not gigabytes.

- Point-in-time restore. If something breaks on Tuesday, Monday’s state is a command away.

The repo sits in Cloudflare R2 — S3-compatible, zero egress fees, already part of my infra. A systemd user timer fires nightly at 04:30 with 15 minutes of jitter.

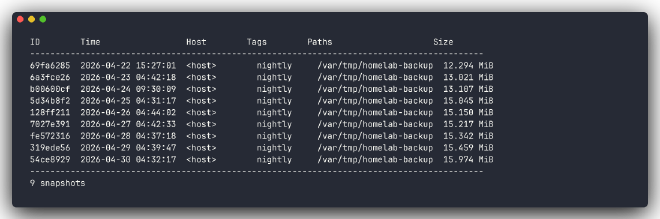

The dedup is real, not marketing copy:

What gets backed up

Not everything. Only things that are (a) stateful and (b) not regenerable from code or downloads:

| What | How |
|---|---|
| Jellyfin database | SQLite .backup: online-consistent under live writes |
| WireGuard wg0.conf | plain copy: server private key + peer keys |
| Traefik acme.json | docker exec cat: Let’s Encrypt certs |
| AdGuard AdGuardHome.yaml | plain copy: upstreams, filters, persistent clients |

Query logs, thumbnail caches, transcoded media: all skipped. Regenerable or too big to care about.

The lesson: don’t just cp a running database

My first instinct was cp jellyfin.db /backup/. Then I read the SQLite docs.

A plain copy of a live SQLite file has no consistency guarantee. If the service is mid-write when the copy happens, you get a file that looks fine but restores into corruption — and you won’t find out until you actually need it.

SQLite ships an online backup API — sqlite3 src.db ".backup target.db" — that takes a consistent snapshot while the service keeps writing. That’s what my script uses now, for every database.
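For anyone scripting the staging step in Python rather than shelling out to sqlite3, the standard library exposes the same online backup API as `Connection.backup`. This is a minimal sketch, not my actual staging script; the function name and the paths in the usage note are made up for illustration.

```python
import sqlite3
from pathlib import Path


def snapshot_sqlite(src: Path, dest: Path) -> None:
    """Write a consistent copy of a live SQLite database to dest.

    Uses SQLite's online backup API (the same mechanism behind the
    sqlite3 CLI's .backup command), so the owning service can keep
    writing while the copy is taken.
    """
    dest.parent.mkdir(parents=True, exist_ok=True)
    source = sqlite3.connect(src)
    target = sqlite3.connect(dest)
    try:
        # Copies every page of the source database into the target.
        source.backup(target)
    finally:
        target.close()
        source.close()
```

Something like `snapshot_sqlite(Path("jellyfin.db"), Path("/backup/staging/jellyfin.db"))` would then produce a file that is safe to hand to restic (both paths are placeholders).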

The second lesson: test the failure path

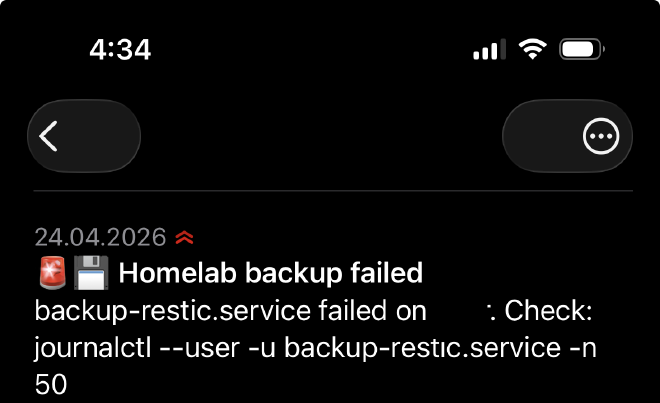

Nightly timers are silent by default. They work until they don’t, and you find out three weeks later when you actually need a restore.

I wired an OnFailure= hook to the systemd unit that pushes a notification to ntfy.sh when the backup fails. Phone pings me, I check journalctl, fix it in the morning.
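A failure hook along these lines can be very small. The sketch below builds and sends an ntfy message with Python's standard library. The topic name and the unit name in the usage note are hypothetical, and it assumes the public ntfy.sh server, which accepts a plain POST to the topic URL with optional Title, Priority, and Tags headers.

```python
import urllib.request

NTFY_URL = "https://ntfy.sh"
TOPIC = "homelab-backup-alerts"  # hypothetical topic name, pick your own


def build_alert(unit: str, topic: str = TOPIC) -> urllib.request.Request:
    """Build (but do not send) the ntfy request for a failed unit."""
    body = f"{unit} failed. Check: journalctl --user -u {unit}"
    return urllib.request.Request(
        f"{NTFY_URL}/{topic}",
        data=body.encode("utf-8"),
        method="POST",
        headers={"Title": "Backup failed", "Priority": "high", "Tags": "warning"},
    )


def send_alert(unit: str) -> int:
    """POST the alert to ntfy and return the HTTP status code."""
    with urllib.request.urlopen(build_alert(unit), timeout=10) as resp:
        return resp.status
```

The unit named in OnFailure= would run a script that calls something like `send_alert("restic-backup.service")`, with the unit name being an assumption here. Triggering it once by hand, by making the backup fail on purpose, is the only way to know the phone actually pings.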

Retention

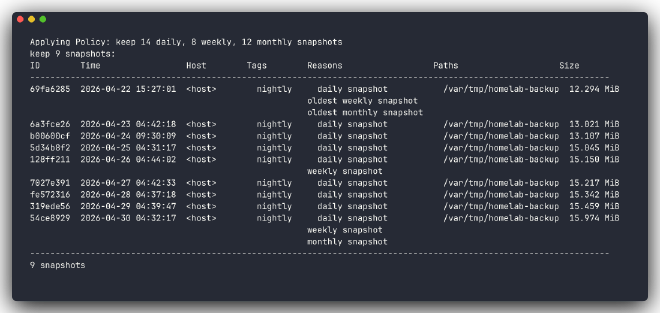

14 daily, 8 weekly, 12 monthly. restic forget --prune enforces it at the end of every run. Repo stays bounded without my help.

forget --prune --dry-run showing which snapshots get kept and why.
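As a sketch, that policy maps onto restic's `--keep-daily`, `--keep-weekly`, and `--keep-monthly` flags. The helper below only assembles the command line; its name is hypothetical, and it assumes the repository location and password reach restic through environment variables such as RESTIC_REPOSITORY and RESTIC_PASSWORD_FILE.

```python
# Retention policy from the post: 14 daily, 8 weekly, 12 monthly.
KEEP = {"daily": 14, "weekly": 8, "monthly": 12}


def forget_command(keep: dict = KEEP, dry_run: bool = False) -> list:
    """Build the argv for a `restic forget --prune` run.

    With dry_run=True restic only reports which snapshots it would
    keep or remove, without touching the repository.
    """
    cmd = ["restic", "forget", "--prune"]
    for period, count in keep.items():
        cmd += [f"--keep-{period}", str(count)]
    if dry_run:
        cmd.append("--dry-run")
    return cmd
```

Passing the result to `subprocess.run(forget_command(dry_run=True), check=True)` is a low-risk way to preview a policy change before letting it prune anything.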

Still on the list

No object-lock on the R2 bucket yet. If the host is compromised and the attacker has the restic password, they can forget --prune the whole repo. A second push to an immutable target (Backblaze B2 with Object Lock) is the next step.

Monthly spot-restore, quarterly full drill. A backup that hasn’t been restored is not a backup.
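One way a spot-restore could be checked, sketched under the assumption that a snapshot has already been restored into a scratch directory: run SQLite's `PRAGMA integrity_check` against each restored database. The function name is hypothetical, and a clean result only shows the file is structurally sound, not that the service will start on it.

```python
import sqlite3
from pathlib import Path


def check_restored_db(path: Path) -> list[str]:
    """Return a list of problems found in a restored SQLite file.

    An empty list means PRAGMA integrity_check reported "ok".
    """
    if not path.is_file():
        # sqlite3.connect would silently create an empty database here.
        return [f"missing file: {path}"]
    con = sqlite3.connect(path)
    try:
        rows = con.execute("PRAGMA integrity_check").fetchall()
    except sqlite3.DatabaseError as exc:
        # Raised when the file is not a readable SQLite database at all.
        return [str(exc)]
    finally:
        con.close()
    messages = [row[0] for row in rows]
    return [] if messages == ["ok"] else messages
```

A quarterly full drill would go further and bring the services up on the restored state, but a check like this is cheap enough to run monthly.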

If you want the actual commands — bucket setup, systemd unit files, the staging script, the restore command — I’ve written that up in the companion setup guide.